Group #21

- David Dohan
- Miles Yucht
- Andrew Cheong
- Shubhro Saha

Oz authenticates individuals into computer systems using sequences of basic hand gestures.

Links to previous blog posts:

Videos:

First trial: Video Demo

Sample use + voiceover: Video with voiceover

The key components of Oz are a webcam, a browser plugin, and a gesture recognition engine. We also use a black glove to ease recognition in the prototype. When a user is on a login page (specifically Facebook for our prototype), they can select the Oz browser plugin and add a user to the system. Once a user has been added, they can be selected in the plugin and authenticated by entering their series of hand gestures. The Chrome plugin uses a Python script to acquire and classify the hand image from the webcam. The system is fully integrated into the browser.

Changes since P6:

- Previously, all gesture acquisition was done in Python code, because we expected Oz to provide a simple API that handled image acquisition and simply handed the username and password (or a corresponding hash) to the browser. In this iteration, we developed a Chrome extension that interacts dynamically with the user and hides the low-level text interface that we used in P6.

-

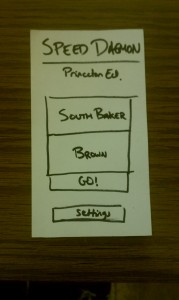

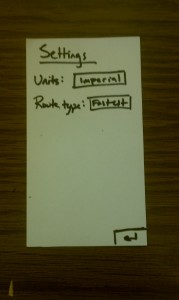

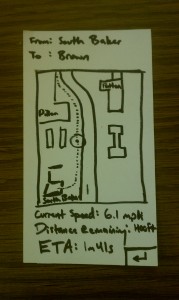

In order to make the browser plugin more interactive and responsive to the user, the gesture acquisition code is now done in Javascript in the plugin. This is the most visible change to the user. This involved a significant rewrite of our plugin/script communication architecture. The plugin includes interfaces to select the user, add new users, reset password, and login.

- In moving user interaction into the plugin, we made several changes to it:

-

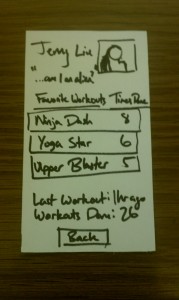

There is now explicit feedback to the user when a gesture is read in. This is indicated by a graphic similar to the following one:

-

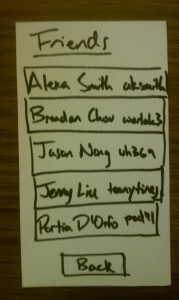

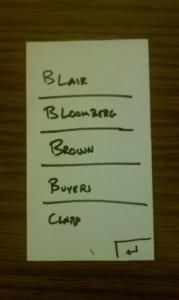

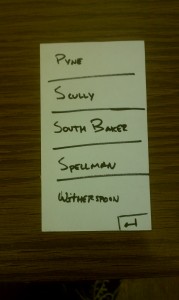

The user can select a recently used profile without any typing (by tapping it on a touch screen or clicking it with a mouse). This means there is no need for keyboard interaction in many cases.

- We also made security changes that are less visible to the user. User information is now encrypted for secure storage, using the user's series of gestures as the key.
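
As a rough illustration of using a gesture series as a key, the sketch below derives a fixed-length key from an ordered list of gesture labels with PBKDF2 from Python's standard library. This is not taken from the Oz source: the function name `derive_key`, the label strings, the salt handling, and the iteration count are all assumptions made for the example.

```python
import hashlib
import os


def derive_key(gestures, salt, iterations=200_000):
    """Derive a 32-byte key from an ordered sequence of gesture labels.

    Hypothetical helper: the labels and parameters are illustrative only.
    """
    # Join with a separator that cannot appear in a label, so that
    # ["ab", "c"] and ["a", "bc"] produce different key material.
    material = "\x1f".join(gestures).encode("utf-8")
    return hashlib.pbkdf2_hmac("sha256", material, salt, iterations, dklen=32)


# The salt is random per user and stored next to the encrypted data.
salt = os.urandom(16)
key = derive_key(["fist", "peace-sign", "trigger"], salt)
```

The same gestures in the same order with the same salt reproduce the key, while any change in the sequence yields an unrelated key. The derived key would then be passed to a real cipher, which is outside the scope of this sketch.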

How our goals have changed since P1:

Over the course of the semester, we realized that although LEAP is a really cool and useful technology, it is rather tricky to use for hand pose recognition because it cannot identify which finger is which. Instead, it detects the fingers that are present and reports their position, width, length, direction, and other features. It was exceedingly difficult to identify individual fingers accurately from this information alone. Consequently, we moved to a webcam, which provides much more raw data about the location of the hand, although only in two dimensions. Using the OpenCV library, we were able to isolate the hand in the image and pass its features to an SVM. This produced much more reliable results and was much easier for us to use, with the downside of occasional confusion between similar gestures (for example, “peace-sign” vs. “trigger”). By limiting the set of similar gestures and gathering a large amount of training data, we got the system to report the correct gesture much more often.
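
To give a feel for the "isolate the hand, then compute features" step, here is a minimal pure-Python sketch. Oz itself uses OpenCV for thresholding and contour-finding; this stand-in only thresholds a tiny grayscale grid (dark glove on a light background) and computes a few shape features. The cutoff value and the feature names are assumptions for illustration, not the features Oz actually uses.

```python
def threshold(gray, cutoff=60):
    """Return a binary mask: 1 where a pixel is darker than cutoff (the glove)."""
    return [[1 if px < cutoff else 0 for px in row] for row in gray]


def hand_features(mask):
    """Compute simple shape features from a binary mask, or None if it is empty."""
    points = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not points:
        return None  # no hand in view
    rows = [r for r, _ in points]
    cols = [c for _, c in points]
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1
    area = len(points)
    return {
        "area": area,                       # number of hand pixels
        "aspect": width / height,           # bounding-box width over height
        "extent": area / (width * height),  # fraction of the bounding box filled
    }


# A toy 4x5 frame: bright background (200) with a dark blob (20).
frame = [
    [200, 200, 200, 200, 200],
    [200,  20,  20, 200, 200],
    [200,  20,  20,  20, 200],
    [200, 200, 200, 200, 200],
]
features = hand_features(threshold(frame))
```

A feature vector like this, computed per frame, is the kind of input that would then be handed to the classifier.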

Previously, we intended to incorporate facial recognition as a task completed by Oz during the user registration process. While facial recognition is interesting and could be implemented in later versions of Oz, we as a group realized that acquiring training data and refining the hand gesture recognition algorithm was more important. Since the current method of selecting the user profile accomplishes the same goal as facial recognition, namely minimizing keyboard interaction, we shifted our priorities away from facial recognition.

Critical evaluation of the project:

One of the goals of Oz is to replace keyboard entry of usernames and passwords, so in designing Oz we must always ask: “How much does Oz improve the experience of logging into websites?” Metrics for this include speed, reliability, and user enjoyment compared with using the keyboard. Interestingly, although users seemed to have a difficult time using Oz, and Oz performed significantly worse than simply typing a password, they rated the experience as highly enjoyable overall, perhaps because of the novelty of a contact-free, gesture-based interface. With further practice and refinement of the gesture recognition, we suspect that Oz could compete with the speed at which users type passwords, and if we were to include our original plans for facial recognition, the overall login process could possibly be faster than using the keyboard. However, any such claim needs to be verified through experiment before one can extrapolate to the future performance of any product, Oz included.

The types of technology used in Oz are relatively inexpensive and widespread. We used a Microsoft LifeCam Cinema webcam in our prototype, but simpler, less expensive webcams would probably work just as well: low- to mid-range webcams can probably resolve enough of the hand to serve as a drop-in replacement. The other materials are a box and green paper, both very inexpensive. Hence, from a cost standpoint, Oz (nearly) passes with flying colors; all that remains is to test it with a lower-cost webcam. That said, the actual apparatus, box and all, is rather bulky and at the moment remains somewhat unintuitive for most users. It doesn’t suggest the appropriate way to use it, so different users made similar mistakes (one of the most common was not putting their hand far enough into the box).

Security is another serious concern when dealing with user credentials. There are two main fronts on which Oz could be attacked: an attacker could impersonate a user by imitating that user's hand gestures, or they could try to extract the password from the encrypted version stored in Oz. In the current implementation, the first attack is quite feasible, in the same way that an attacker could type in another user’s credentials to gain access to that user’s account, although using facial recognition to identify the user would make it less trivial. As for the second, a skilled attacker could extract a password from the current implementation relatively easily. We saw the challenge in this project as building a device that interprets hand gestures; a working user authentication system is simply icing on the cake.

How could we move forward:

One of the biggest problems with Oz is the accuracy of gesture recognition. One of the motivations behind Oz was that the space of hand gestures is larger than the space of characters in a password, but as we use more of this space, the rate at which a gesture is misread as a nearby one increases. For Oz to work as a reliable system, this error rate must be very low, so one step is to expand the set of usable, recognizable gestures while keeping the error rate low. One possible approach is to use a depth camera, such as the one in the Xbox Kinect. This would give the SVM more information about the image, and recent research in computer vision has made pose estimation with depth cameras more feasible for systems such as Oz. Another plausible technique is to use multiple cameras from different perspectives instead of only a top-down view. There are also many potential methods for choosing features to train on, but prototyping and testing each of them would have taken a prohibitively long time during the semester. Additionally, accounting for rotation and shadows in the box would certainly improve the accuracy of Oz, as would more training data.
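
The tradeoff between a larger gesture set and a higher error rate can be made concrete with a little arithmetic. The sketch below assumes gestures are chosen uniformly and that recognition errors on each gesture are independent; both are simplifying assumptions, and the numbers are illustrative rather than measurements from Oz.

```python
import math


def sequence_entropy_bits(alphabet_size, length):
    """Bits of entropy in a uniformly random sequence of the given length."""
    return length * math.log2(alphabet_size)


def sequence_success_rate(per_gesture_accuracy, length):
    """Chance that every gesture in a sequence is read correctly,
    assuming independent errors."""
    return per_gesture_accuracy ** length


if __name__ == "__main__":
    # Hypothetical comparison: gesture sets of different sizes versus
    # the roughly 95 printable ASCII characters, for length-4 sequences.
    for size in (8, 16, 95):
        print(size, round(sequence_entropy_bits(size, 4), 2))
    # Hypothetical per-gesture accuracies and the resulting login success rate.
    for accuracy in (0.99, 0.95, 0.90):
        print(accuracy, round(sequence_success_rate(accuracy, 4), 3))
```

Because the success rate falls geometrically with sequence length, even a small per-gesture error rate has a noticeable effect on whether a whole gesture password is accepted.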

There are several other possible approaches to hand gesture authentication, each with its own tradeoffs between security and robustness. One such approach is to store a series of hand pose skeletons for each password. When authenticating, Oz would compute the total error between the entered gestures and the stored poses. If the total error is sufficiently small, the password is accepted and the user authenticated. Such a technique, however, doesn’t allow us to encrypt the user’s account passwords as we do in our current implementation.
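
A minimal sketch of the skeleton-matching idea follows. It represents each pose as a list of 2D joint coordinates and accepts an attempt when the summed joint distances fall under a threshold. The representation, the distance measure, and the names `pose_error` and `matches` are assumptions for illustration; Oz does not implement this approach.

```python
import math


def pose_error(pose, template):
    """Sum of Euclidean distances between corresponding joints."""
    if len(pose) != len(template):
        raise ValueError("poses must have the same number of joints")
    return sum(math.dist(p, q) for p, q in zip(pose, template))


def matches(attempt, stored, max_total_error):
    """Accept if the attempt has the right length and small total error."""
    if len(attempt) != len(stored):
        return False
    total = sum(pose_error(a, s) for a, s in zip(attempt, stored))
    return total <= max_total_error


# Hypothetical two-gesture password with two joints per pose.
stored = [[(0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0), (0.0, 1.0)]]
attempt = [[(0.0, 0.1), (1.0, 0.0)], [(0.0, 0.0), (0.0, 1.2)]]

accepted = matches(attempt, stored, max_total_error=0.5)
rejected = matches(attempt, stored, max_total_error=0.2)
```

Because an attempt only needs to be close to the stored poses rather than identical, it cannot reproduce an exact key, which is one way to see why this approach does not combine with encrypting under the gesture series.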

One related issue is that different people have different understandings of what a gesture is and form the same gesture in different ways. We could certainly write a tutorial to show users how to make the different gestures, but we could augment this by having each user train their own classifier. The advantage is that Oz would tolerate between-user variation in gestures, as each user would provide their own training data (rather than use a default SVM that is the same for everyone). This would also make the system more sensitive to biometric differences between users.
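
The per-user idea can be sketched as a dictionary holding one classifier per user. To keep the example self-contained it uses a simple nearest-centroid classifier as a stand-in for the SVM; the class, the function names, and the feature vectors are all hypothetical.

```python
from collections import defaultdict


class CentroidClassifier:
    """Nearest-centroid classifier, used here as a stand-in for an SVM."""

    def __init__(self):
        self.centroids = {}

    def fit(self, samples, labels):
        grouped = defaultdict(list)
        for vec, label in zip(samples, labels):
            grouped[label].append(vec)
        # Average the feature vectors of each label, column by column.
        self.centroids = {
            label: [sum(col) / len(vecs) for col in zip(*vecs)]
            for label, vecs in grouped.items()
        }
        return self

    def predict(self, vec):
        def sq_dist(label):
            return sum((a - b) ** 2 for a, b in zip(vec, self.centroids[label]))
        return min(self.centroids, key=sq_dist)


classifiers = {}  # one trained classifier per user name


def enroll(user, samples, labels):
    classifiers[user] = CentroidClassifier().fit(samples, labels)


def classify(user, vec):
    return classifiers[user].predict(vec)


# Two hypothetical users whose hands produce different features for a "fist".
enroll("alice", [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]], ["fist", "fist", "open"])
enroll("bob", [[0.0, 1.0], [1.0, 0.0]], ["fist", "open"])
```

With this setup the same feature vector can map to different gestures depending on whose classifier is consulted, which is the between-user tolerance described above.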

Source code:

The source code is available on GitHub at http://github.com/dmrd/oz.git. The link for the final commit is https://github.com/dmrd/oz/commit/f252e70a5a434aeca28509e8d3dea9087b89ca84. If you really want a zip, you can get it at https://www.dropbox.com/s/9pmwwawrprnee4r/oz.zip.

Third-party code used:

- The sklearn module: this module provides several machine-learning algorithms for use in Python. Specifically, we use the LinearSVC class, which provides a linear support-vector machine classifier.

- The OpenCV (cv2) module: this module provides Python bindings to obtain images from the webcam and to preprocess those images in preparation for the machine learning step, including contour-finding and thresholding. Additionally, we use the numpy module because OpenCV's Python bindings represent images as numpy arrays.

- The slurpy module: this module provides a method for registering Python functions with an instance of a slurpy server, which can then be called as normal functions from JavaScript. We used this module to allow our Chrome plugin, which is written in JavaScript, to call the gesture recognition code written in Python.
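
The general pattern behind calling Python from the plugin can be sketched without slurpy. The example below is not slurpy's API: it is a hypothetical registry that maps function names to Python callables and dispatches JSON requests to them, which is roughly what a JavaScript caller would need on the Python side. The `classify_gesture` body is a placeholder, not the real recognition code.

```python
import json


class FunctionRegistry:
    """Hypothetical name-to-function registry with JSON dispatch."""

    def __init__(self):
        self._functions = {}

    def register(self, func):
        """Decorator that exposes func under its own name."""
        self._functions[func.__name__] = func
        return func

    def handle(self, request_json):
        """Call the named function with the given args; reply as JSON."""
        request = json.loads(request_json)
        func = self._functions.get(request["name"])
        if func is None:
            return json.dumps({"error": "unknown function"})
        result = func(*request.get("args", []))
        return json.dumps({"result": result})


registry = FunctionRegistry()


@registry.register
def classify_gesture(features):
    # Placeholder standing in for the real recognition code.
    return "fist" if features[0] > 0.5 else "open"


response = registry.handle('{"name": "classify_gesture", "args": [[0.9, 0.1]]}')
```

In a real setup the JSON strings would travel over HTTP or a similar channel between the browser and the Python process; that transport is omitted here.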

Links to printed materials at Demo day

https://www.dropbox.com/s/po5wnbmnxu0g6qv/poster.jpg is the link to our poster.