Brisq: P4

The Cereal Killers, Group 24

Group members: Bereket, Ryan, Andrew, Lauren

Brisq is a gesture-control bracelet that lets you perform user-set functions on your laptop with simple arm motions.

Testing Methods

Consent Script:

This device is meant to allow “hands-free” usage of your computer, letting you perform some pre-determined functions on your laptop with simple motions. To participate in this study, you will be required to perform a simple task while wearing a small bracelet. The computer functions you wish to perform will be carried out by a live assistant, in place of our (non-working) prototype. Do you consent to perform an experiment with our prototype? Do you consent to be videotaped? The video of you will be used solely for our project and will not be distributed or sold. Would you like to remain anonymous?

Participants

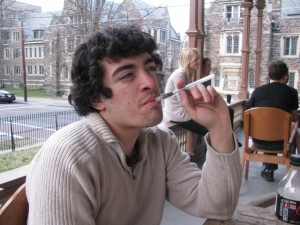

We chose our participants to fit each task group as well as possible. The TV user was chosen because he frequently watches TV and movies on his computer. The cooking user was chosen because he is a member of a vegetarian food co-operative and therefore cooks for several people twice a week. The final user is currently limited by a collarbone injury and therefore fits our model of a disabled computer user.

Environment

Our prototype consisted of a simple bracelet and a human assistant who watched for the user's gestures and carried out the corresponding computer functions. The testing environment was chosen to match the task. For example, the cooking task took place in the co-op kitchen and the TV task took place in the Quad TV room. The only equipment was a laptop on which the assistant performed the computer functions.

Procedure

One member of the team acted as the assistant, watching for gestures and entering the corresponding commands on the computer. Another member read the consent and demo scripts. That member and a third member observed the session and took notes. A fourth member took photos or video.

Link for [Demo Script] and [Task Script]

Disabled Video

Results Summary

All three participants seemed to like the device, and we received satisfaction ratings of 4, 4, and 5. The prices people were willing to pay for the device ranged from $15 to $50. In some cases, users had trouble coming up with computer commands they wanted to map to gestures. The disabled user case was probably the most difficult because the user needed the entire range of computer functions, not just play/pause or scrolling. None of the users were put off by the potential requirement to perform special start and stop gestures to initiate their commands. Users did not think they would wear the bracelet at all times; they would carry it around and put it on when they needed it. Finally, all participants had interesting ideas for the design of the device, including watch and ring form factors.

Discussion

Because participants had trouble picking functions, we could suggest some to improve their experience. For example, our disabled user had a great idea: gesture for shift and ctrl while his good hand completes the click combination. We could also pre-program a few simple, easy-to-remember gestures to help people overcome the steep learning curve. Our cooking user made a good point that his desire to use the product depends on how well the sensor is calibrated, and thus on how reliably we can detect his gestures. Our primary focus should therefore be the machine learning algorithm. The algorithm would also be much more robust if we used a list of pre-programmed gestures instead of user-defined ones.
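To make the idea of pre-programmed gestures with suggested functions concrete, here is a minimal sketch of how default bindings could be offered and then remapped by the user. The gesture names, command names, and the `make_bindings` helper are all hypothetical illustrations, not part of our prototype.

```python
# Hypothetical preset gestures and suggested default commands.
# None of these names come from the Brisq prototype.
DEFAULT_BINDINGS = {
    "flick_left": "previous_page",
    "flick_right": "next_page",
    "raise_arm": "play_pause",
    "twist_wrist": "hold_shift",
}


def make_bindings(user_overrides=None):
    """Start from the suggested defaults and apply the user's remappings.

    Only preset gestures can be remapped, which keeps the set of
    gestures the recognizer must distinguish small and fixed.
    """
    bindings = dict(DEFAULT_BINDINGS)
    for gesture, command in (user_overrides or {}).items():
        if gesture not in DEFAULT_BINDINGS:
            raise KeyError(f"unknown preset gesture: {gesture}")
        bindings[gesture] = command
    return bindings
```

A user who accepts the suggestions calls `make_bindings()` unchanged, while the disabled user's idea could be expressed as `make_bindings({"twist_wrist": "hold_ctrl"})`.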

Test Plan

We wish to continue testing in order to answer two specific questions. The first is how the user should enter gesture recognition mode. We want the method to be both convenient and unobtrusive. The second is whether to offer a set of pre-programmed gestures for users to map commands to, or to let users define their own. The advantage we foresee for pre-programmed gestures is that they would give users a simple way to get started with the device and would keep users from creating overly complex gestures that are hard for them to remember and hard for the algorithm to recognize.
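One candidate answer to the first question is a pair of start and stop gestures that switch recognition mode on and off. The sketch below shows that gating logic only; it assumes a recognizer that already emits gesture labels, and the labels `"start"` and `"stop"`, the function name, and the example bindings are hypothetical.

```python
START, STOP = "start", "stop"  # hypothetical delimiter gestures


def commands_from_gestures(gestures, bindings):
    """Return the commands triggered by a stream of gesture labels.

    Gestures are acted on only between a START and a STOP gesture,
    so ordinary arm movement outside recognition mode is ignored.
    """
    listening = False
    commands = []
    for gesture in gestures:
        if gesture == START:
            listening = True
        elif gesture == STOP:
            listening = False
        elif listening and gesture in bindings:
            commands.append(bindings[gesture])
    return commands
```

For example, with `bindings = {"raise_arm": "play_pause"}`, the stream `["raise_arm", "start", "raise_arm", "stop", "raise_arm"]` triggers play/pause once, because only the middle gesture falls inside recognition mode.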