Group #21: Dohan, Yucht, Cheong, Saha

- David Dohan
- Miles Yucht
- Andrew Cheong
- Shubhro Saha

Oz authenticates individuals into computer systems using sequences of basic hand gestures.

Test Method Description

Click here to view our informed consent document. We obtained informed consent by having prospective participants read the document. We then orally reiterated its two most important points: that participants may stop at any point in the study if they wish, and that they may be photographed and/or videotaped during the study, including identifiable features like their faces. We confirmed that each participant was OK with being recorded before proceeding.

We selected our participants from the Princeton student body around Frist Campus Center. We had two male students and one female student. One of the students also happened to be left-handed.

The tests took place in Frist Campus Center, just footsteps away from the television-viewing area. We set up a laptop computer on a folding table across from the study booths, then asked passersby if they would be willing to participate in a 5-minute study. Our Leap Motion device was placed inside a small cardboard box within hand’s reach next to the laptop.

Click here to view the demo script. Shubhro Saha began the prototype testing by walking each user through the informed consent script. After obtaining consent, Shubhro explained the Oz prototype and its functionality to the user. After this brief introduction, Andrew Cheong gave the participants instructions for each task. The first task was profile selection followed by the handshake. The second task was facial recognition followed by the handshake. The last task asked the user to reset their handshake by following the instructions provided by the interface. While the participants interacted with Oz, Shubhro acted as the interactive interface. David Dohan was in charge of recording, videotaping, and taking photos of the testing. Miles Yucht was the scribe and recorded users’ interactions, responses, questions, and behaviors during the testing procedure.

Results

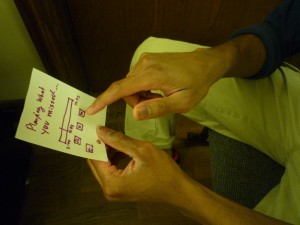

Our first test subject was a left-handed male student. He was initially confused by the paper prototype and was unsure whether to simulate using a mouse or to tap the prototype. After a brief explanation, however, he had no problem using the rest of our interface to select a user profile and enter his handshake without additional prompting. He also had no problems using facial recognition to select a profile, apart from some confusion that he needed to select “Login with Handshake” to initiate it. At the end of logging in, our subject said “Nice! That was easy!” He also had no trouble going through the handshake reset.

Our second test subject was a right-handed male student. He expressed great confusion when prompted to tap the interface. After minor guidance he understood that this prototype was entirely touch-oriented and completed the following tasks easily. He later explained that he would be very willing to try such a product in the future.

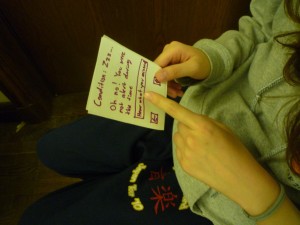

Our third subject was a female student. She appeared to try entering her hand gesture at the user profile selection screen instead of on the subsequent screen, where she was prompted to do so. She smiled with a sense of accomplishment when the second task was completed.

First subject conducts handshake.

Third user logs in through Oz.

Discussion

Problem: Subjects misunderstood the touch interface. Rating: 3

Our results are, for the most part, quite straightforward. Every one of our test subjects successfully authenticated with the Oz handshake system. A common theme across the participants was confusion regarding “tapping the screen” to proceed through the study. We believe this confusion is characteristic only of our paper prototype and would not impact our final system, because in the real environment users would interact by touch or mouse, both of which are ubiquitous and familiar today. One area where the test might have been improved is the password reset task, where we glossed over the email confirmation link sequence. We felt that including the email screen in the paper prototype would distract from the focus of this study, and we do not foresee any problems in this area. Additionally, we should test the usability of initially creating a handshake. This will include verifying that the handshake entered during the reset process is correct by having users re-enter it.

Subsequent Testing / High Fidelity Prototype

Despite the confusion over the touch screen interface, we believe that we are ready to construct a higher-fidelity prototype. Our friends, classmates, and subjects have all expressed interest in an alternative method of authenticating to their computer accounts. Because the Oz product can only be validated through the actual technology (verifying actual hand gestures), implementing a higher-fidelity prototype is necessary. The current interface and design appear to be easy for arbitrary users to navigate, which suggests that no major changes to the prototype are necessary. Further useful information, such as how competitive this method is with typing in a password, requires testing with working hardware to record data about entry times and error rates.
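As a rough illustration of how entry times and error rates from such a follow-up test could be summarized, here is a minimal Python sketch. The `Trial` record, its field names, and the `summarize` helper are hypothetical and not part of Oz; the sketch assumes each login attempt is logged with the method used, the time taken, and whether it succeeded.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    """One logged login attempt (hypothetical record layout)."""
    method: str       # e.g. "handshake" or "password" (assumed labels)
    seconds: float    # time from prompt until the attempt was accepted or rejected
    succeeded: bool   # whether the attempt authenticated the user


def summarize(trials):
    """Return {method: (mean entry time in seconds, error rate)}."""
    by_method = {}
    for trial in trials:
        by_method.setdefault(trial.method, []).append(trial)

    summary = {}
    for method, group in by_method.items():
        mean_time = mean(t.seconds for t in group)
        # Error rate is the fraction of attempts that failed to authenticate.
        error_rate = sum(not t.succeeded for t in group) / len(group)
        summary[method] = (mean_time, error_rate)
    return summary
```

Calling `summarize` on the logged attempts for both handshake and password logins would give one (mean time, error rate) pair per method, which is the comparison described above. With only a handful of participants, these figures would be indicative rather than statistically meaningful.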