By Marisa Sanders for the Office of the Dean for Research

A new study by researchers at Princeton University suggests that sporadic bursts of gene activity may be important features of genetic regulation rather than just occasional mishaps. The researchers found that snippets of DNA called enhancers can boost the frequency of bursts, suggesting that these bursts play a role in gene control.

The researchers analyzed videos of fruit fly (*Drosophila*) embryos undergoing DNA transcription, the first step in activating genes to make proteins. In a study published on July 14 in the journal Cell, the researchers found that placing enhancers in different positions relative to their target genes dramatically changed the frequency of the bursts.

“The importance of transcriptional bursts is controversial,” said Michael Levine, Princeton’s Anthony B. Evnin ’62 Professor in Genomics and director of the Lewis-Sigler Institute for Integrative Genomics. “While our study doesn’t prove that all genes undergo transcriptional bursting, we did find that every gene we looked at showed bursting, and these are the critical genes that define what the embryo is going to become. If we see bursting here, the odds are we are going to see it elsewhere.”

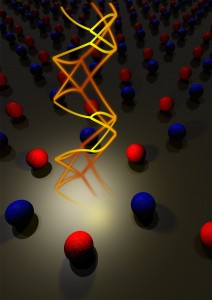

Transcription of DNA occurs when an enzyme known as RNA polymerase converts the DNA code into a corresponding RNA code, which is later translated into a protein. About ten years ago, researchers were puzzled to find that transcription can be sporadic and variable rather than smooth and continuous.

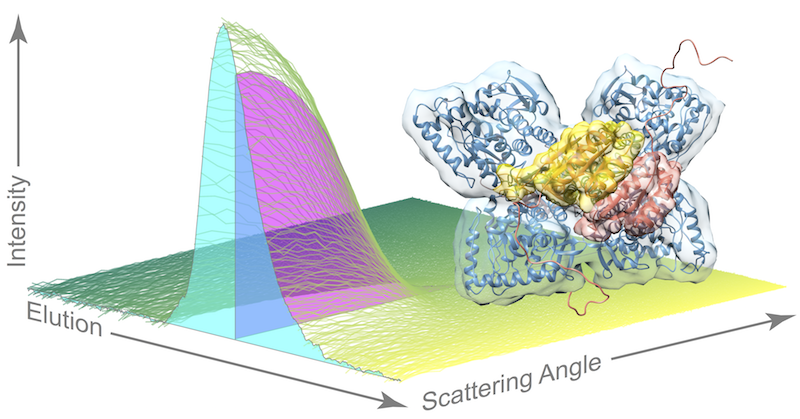

In the current study, Takashi Fukaya, a postdoctoral research fellow, and Bomyi Lim, a postdoctoral research associate, both working with Levine, explored the role of enhancers in transcriptional bursting. Enhancers are bound by DNA-binding proteins that augment or diminish transcription rates, but exactly how they do so is poorly understood.

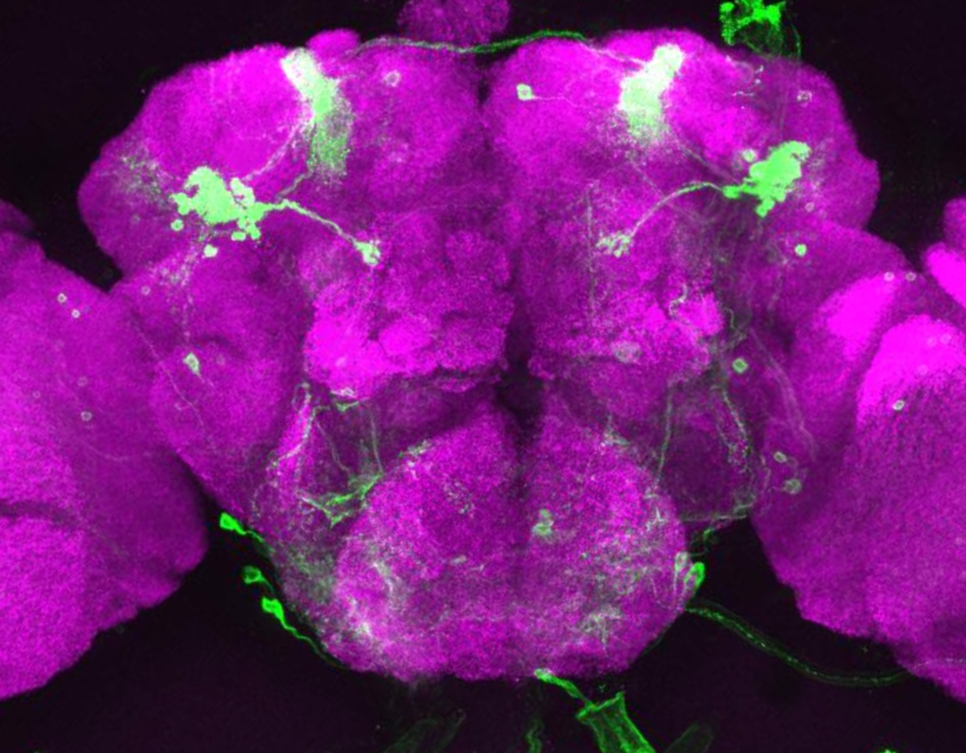

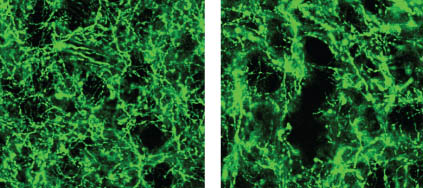

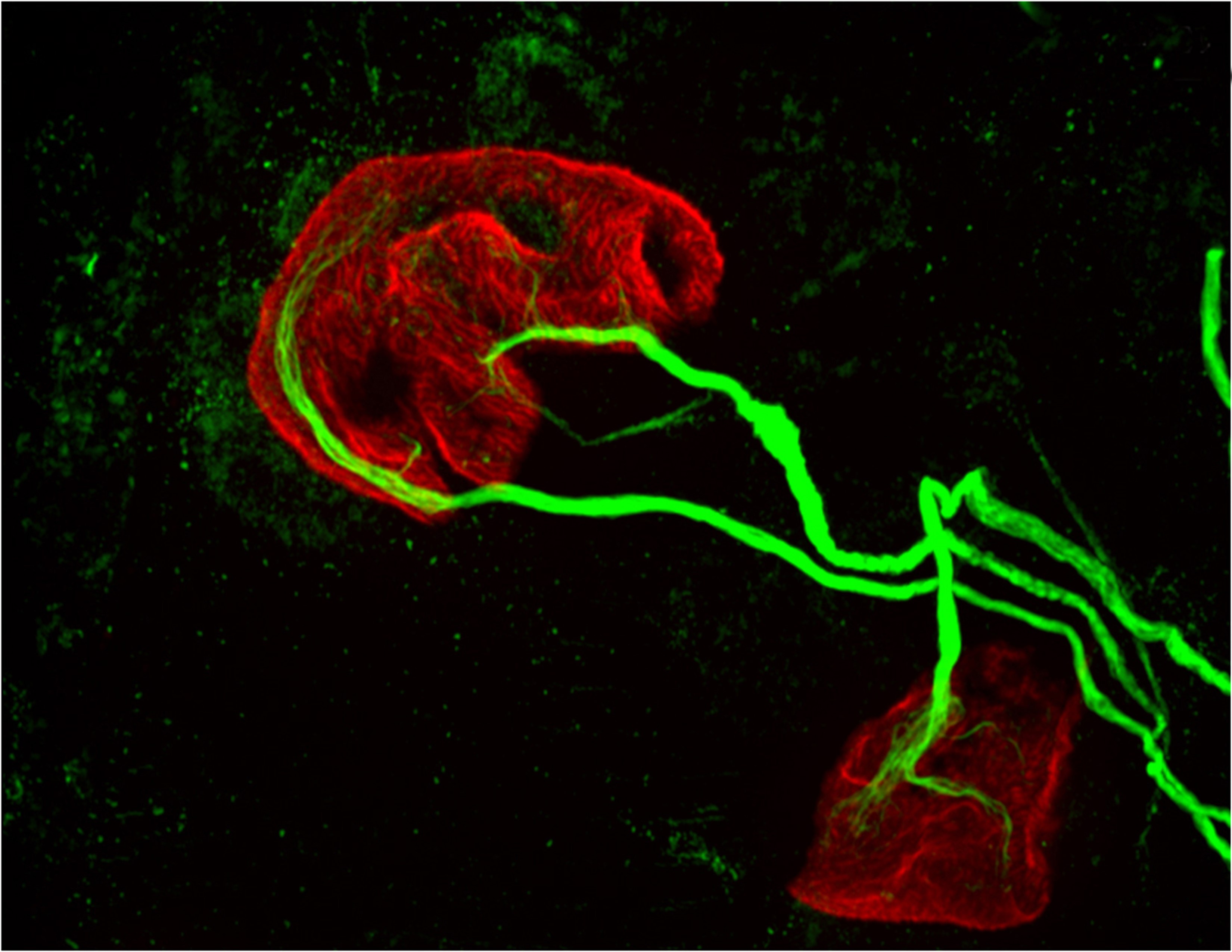

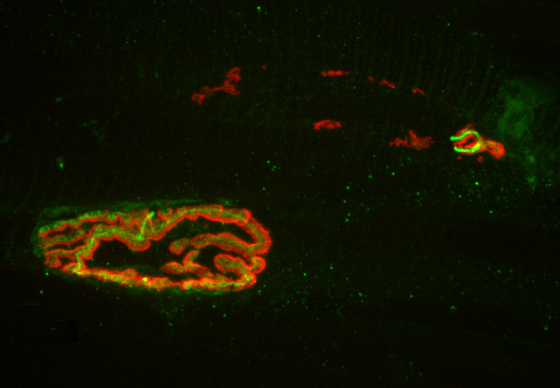

Until recently, visualizing transcription in living embryos was impossible because of limits in the sensitivity and resolution of light microscopes. A method developed three years ago has now made it possible. The technique places fluorescent tags on RNA molecules to make them visible under the microscope. It was developed by two separate research groups: one at Princeton led by Thomas Gregor, associate professor of physics and the Lewis-Sigler Institute for Integrative Genomics, and the other led by Nathalie Dostatni at the Curie Institute in Paris.

The researchers used this live-imaging technique to study fly embryos at a key stage in their development, approximately two hours after the onset of embryonic life, when the genes undergo fast and furious transcription for about one hour. During this period, the researchers observed a significant ramping up of bursting, in which RNA polymerase enzymes cranked out a newly transcribed segment of RNA every 10 or 15 seconds, with each burst lasting perhaps 4 or 5 minutes. The genes then relaxed for a few minutes before another episode of bursting.

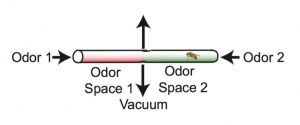

The team then looked at whether the location of the enhancer, either upstream or downstream of the gene, influenced the amount of bursting. In two different experiments, Fukaya placed the enhancer either upstream of the gene's promoter or downstream of the gene, and saw that the two positions produced distinct responses. When the researchers positioned the enhancer downstream of the gene, they observed periodic bursts of transcription. However, when they positioned the enhancer upstream of the gene, nearer the promoter, they saw some fluctuations but no discrete bursts. Overall, they found that the closer the enhancer is to the promoter, the more frequent the bursting.

To confirm their observations, Lim applied additional data-analysis methods to tally the bursts seen in the videos. The team found that burst frequency was related to how strongly the enhancer upregulated gene expression: strong enhancers produced more bursts than weak ones. The team also showed that inserting a segment of DNA called an insulator reduced the number of bursts and dampened gene expression.

In a second series of experiments, Fukaya showed that a single enhancer can simultaneously activate two genes that lie some distance apart on the genome and have separate promoters. It was originally thought that such an enhancer would drive bursting at only one promoter at a time: it would arrive at a promoter, linger, produce a burst, and come off, then randomly select one of the two genes for another round of bursting. Instead, the researchers observed bursting at both genes at the same time.

“We were surprised by this result,” Levine said. “Back to the drawing board! This means that traditional models for enhancer-promoter looping interactions are just not quite correct. It may be that the promoters can move to the enhancer due to the formation of chromosomal loops. That is the next area to explore in the future.”

The study was funded by grants from the National Institutes of Health (U01EB021239 and GM46638).

Access the paper here:

Takashi Fukaya, Bomyi Lim & Michael Levine. “Enhancer Control of Transcriptional Bursting.” Cell (2016). Published July 14; e-published ahead of print June 9. http://dx.doi.org/10.1016/j.cell.2016.05.025